RuntimeError: element 0 of tensors does not require grad and does not have a grad_fn - PyTorch Forums

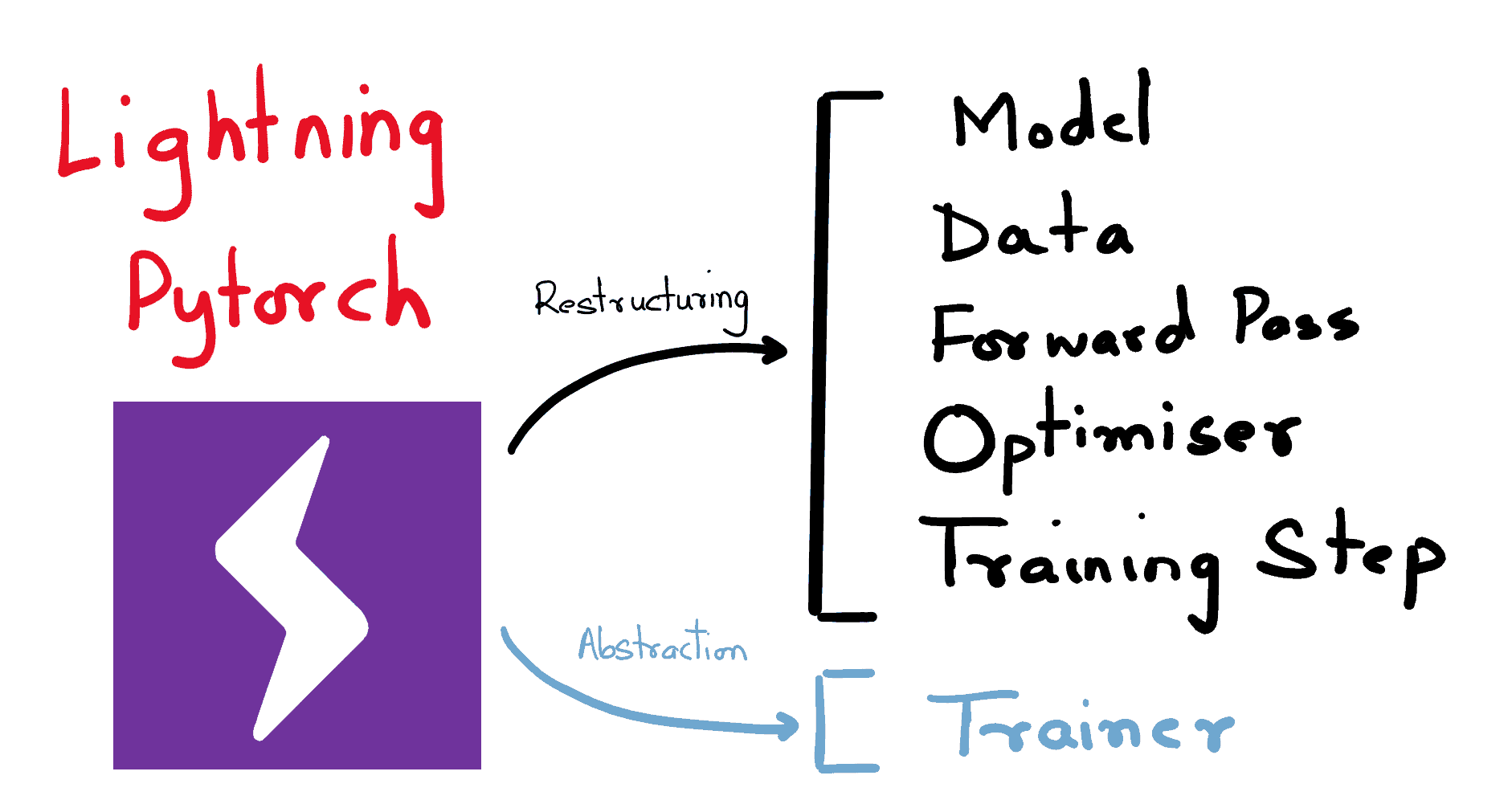

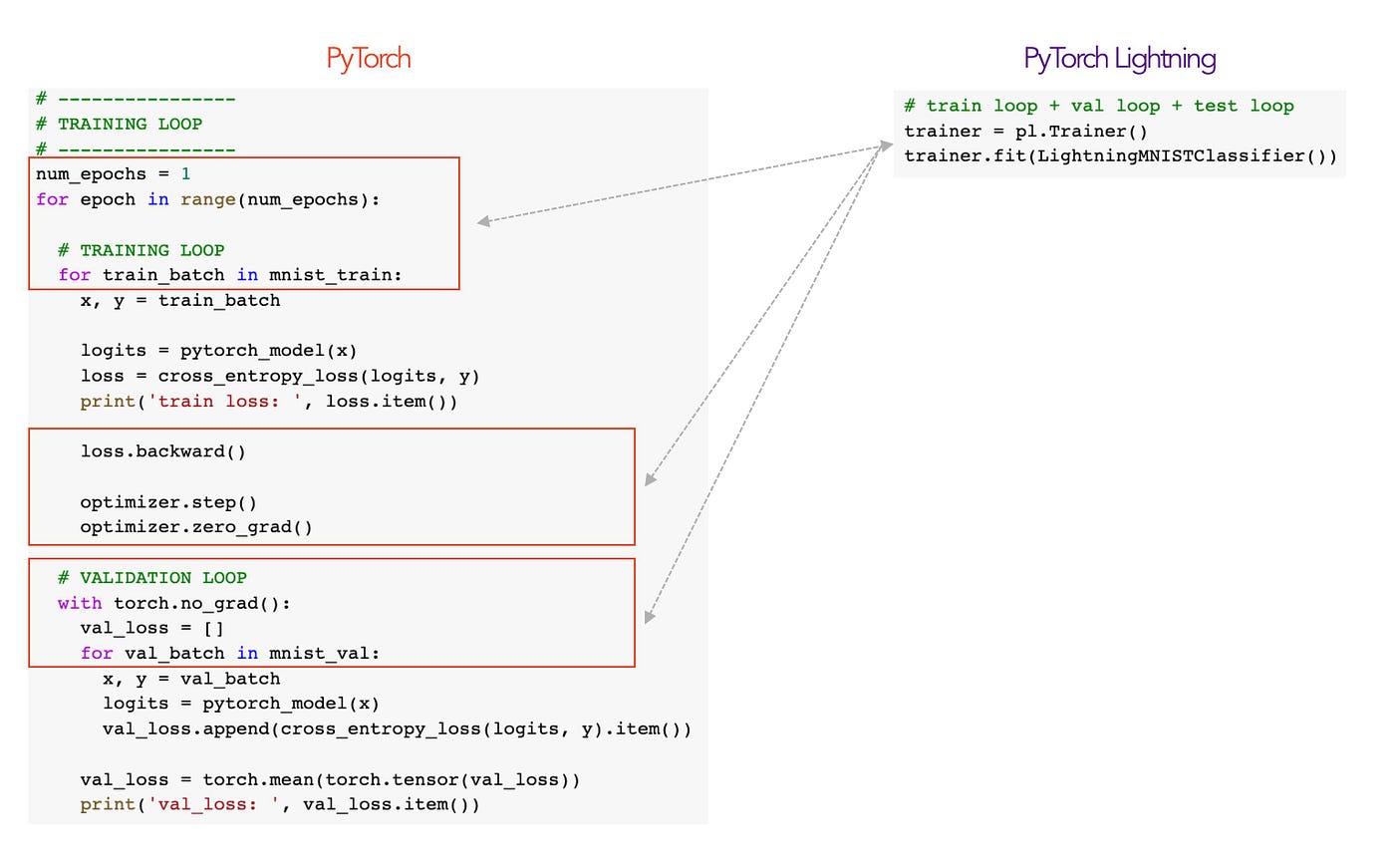

From PyTorch to PyTorch Lightning — A gentle introduction | by William Falcon | Towards Data Science

trainer.fit() stuck with accelerator set to "ddp" · Issue #5961 · PyTorchLightning/pytorch-lightning · GitHub

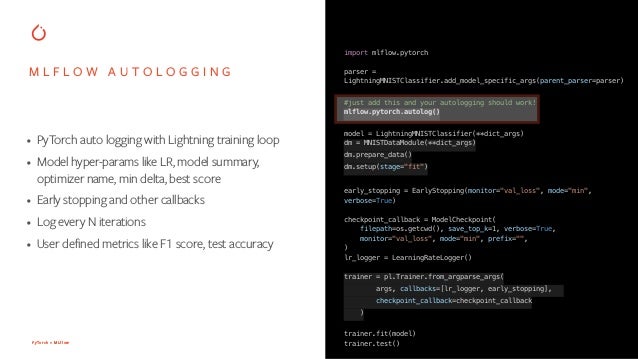

"MisconfigurationError: No TPU devices were found" even when TPU is connected in PyTorch Lightning - Stack Overflow

neptune.ai - Neptune logging was just added to PyTorch Lightning (lightweight PyTorch wrapper). Really easy to use: trainer = Trainer(logger=NeptuneLogger(...)) Check how to use it: https://buff.ly/2TAZdJ8 #MachineLearning #DeepLearning | Facebook

Accessible Multi-Billion Parameter Model Training with PyTorch Lightning + DeepSpeed | by PyTorch Lightning team | PyTorch Lightning Developer Blog

abhishek on Twitter: ""Tez: A Simple PyTorch Trainer" now has 300 stars ⭐️ on GitHub!!! Thank you, everyone!!! Simplicity is the best! Check it out here: https://t.co/BxKAJkeGh8 https://t.co/o2L6lRM6pb" / Twitter

8 Creators and Core Contributors Talk About Their Model Training Libraries From PyTorch Ecosystem - neptune.ai

PyTorch Lightning 1.3- Lightning CLI, PyTorch Profiler, Improved Early Stopping | by PyTorch Lightning team | PyTorch | Medium